When it comes to estimating how good we are at something, research consistently shows that we tend to rate ourselves as slightly better than average. This tendency is stronger in people who score low on cognitive tests. It is known as the Dunning-Kruger Effect (DKE): The worse people are at something, the more they tend to overestimate their abilities, and the “smarter” they are, the less they realize their true abilities.

However, a study led by Aalto University reveals that when it comes to AI, specifically large language models (LLMs), the DKE does not hold: the researchers found that all users show a significant inability to assess their performance accurately when using ChatGPT. In fact, across the board, people overestimated their performance. On top of this, the researchers identified a reversal of the Dunning-Kruger Effect: an identifiable tendency for users who considered themselves more AI literate to assume their abilities were greater than they really were.

“We found that when it comes to AI, the DKE vanishes. In fact, what’s really surprising is that higher AI literacy brings more overconfidence,” says Professor Robin Welsch. “We would expect people who are AI literate to not only be a bit better at interacting with AI systems, but also at judging their performance with those systems, but this was not the case.”

The finding adds to a rapidly growing volume of research indicating that blindly trusting AI output comes with risks such as “dumbing down” people’s ability to source reliable information, and even workforce de-skilling. While people did perform better when using ChatGPT, it is concerning that they all overestimated that performance.

“AI literacy is truly important nowadays, and therefore this is a very striking effect. AI literacy might be very technical, and it’s not really helping people actually interact fruitfully with AI systems,” says Welsch.

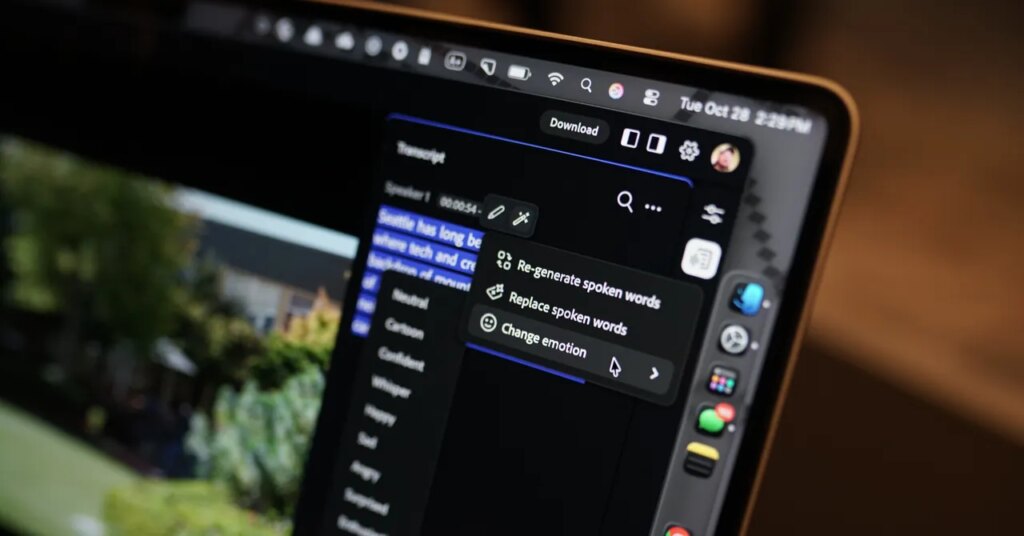

“Current AI tools are not enough. They are not fostering metacognition [awareness of one’s own thought processes] and we are not learning about our mistakes,” adds doctoral researcher Daniela da Silva Fernandes. “We need to create platforms that encourage our reflection process.”

The article appears in Computers in Human Behavior.

Why a single prompt is not enough

The researchers designed two experiments in which some 500 participants completed logical reasoning tasks from the US’s well-known Law School Admission Test (LSAT). Half of the group used AI and half did not. After each task, subjects were asked to assess how well they had performed, and they were promised extra compensation if they did so accurately.

“These tasks take a lot of cognitive effort. Now that people use AI daily, it’s typical that you would give something like this to AI to solve, because it’s so challenging,” Welsch says.

The data revealed that most users rarely prompted ChatGPT more than once per question. Often, they simply copied the question, put it in the AI system, and were happy with the AI’s solution without checking or second-guessing it.

“We looked at whether they truly reflected with the AI system and found that people just thought the AI would solve things for them. Usually there was just one single interaction to get the results, which means that users blindly trusted the system. It’s what we call cognitive offloading, when all the processing is done by AI,” Welsch explains.

This shallow level of engagement may have limited the cues needed to calibrate confidence and allow for accurate self-monitoring. It is therefore plausible that encouraging or experimentally requiring multiple prompts could provide better feedback loops, enhancing users’ metacognition, he says.

So what is the practical solution for everyday AI users?

“AI could ask the users if they can explain their reasoning further. This would force the user to engage more with AI, to face their illusion of knowledge, and to promote critical thinking,” Fernandes says.

More information:

Daniela Fernandes et al, AI makes you smarter but none the wiser: The disconnect between performance and metacognition, Computers in Human Behavior (2026). DOI: 10.1016/j.chb.2025.108779

Citation:

AI use makes us overestimate our cognitive performance, study reveals (2025, October 28), retrieved 29 October 2025 from https://techxplore.com/information/2025-10-ai-overestimate-cognitive-reveals.html
