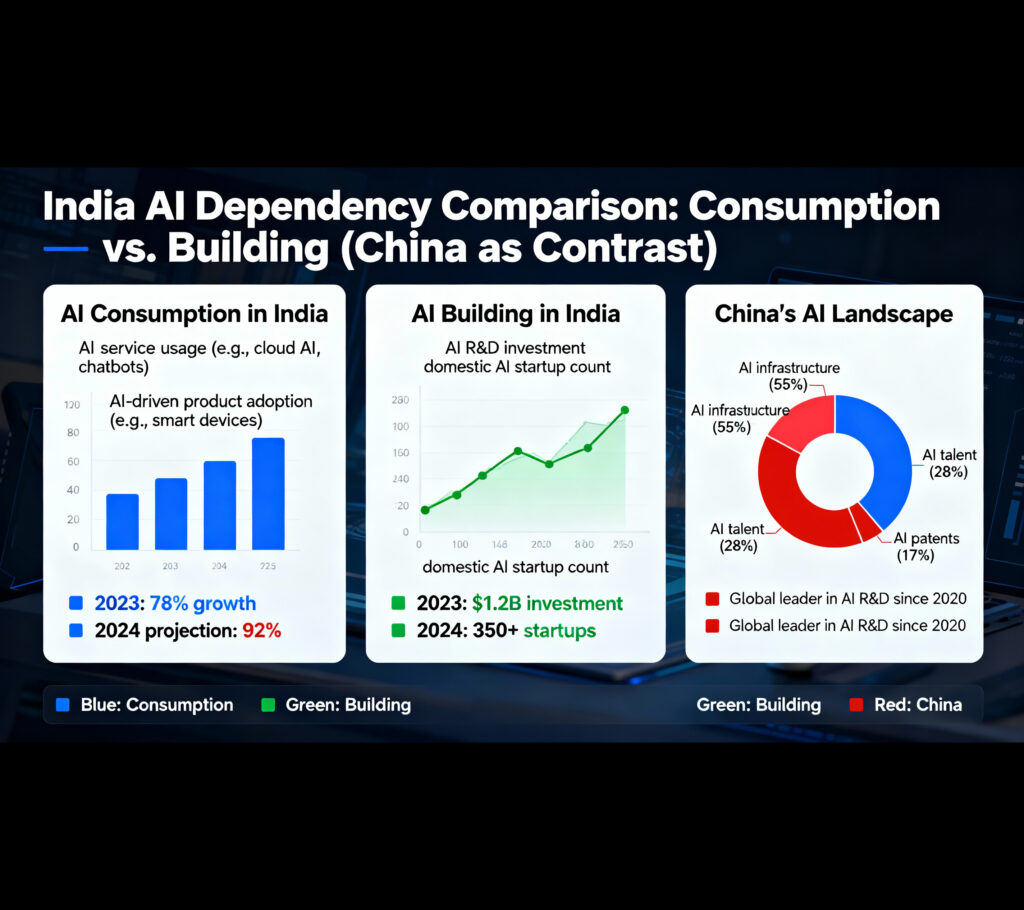

India is adopting AI faster than ever, but most of the intelligence powering that adoption isn’t Indian. Although it built UPI, exports software globally, and produces world-class AI talent, India is consuming AI faster than it is building the infrastructure beneath it.

The Foreign AI Expansion

American AI companies are aggressively expanding into India. OpenAI launched ChatGPT Go at ₹399 per month and later made the service free for Indian users, targeting what executives call the world’s second-largest AI market. Google announced a $15 billion investment over five years to build an AI infrastructure hub in southern India. Microsoft committed $3 billion to expand cloud computing and AI capabilities, while Amazon plans to invest $12.7 billion by 2030. OpenAI is also in discussions to build a data center with at least one gigawatt of capacity.

Meanwhile, India’s first sovereign foundational model, trained on Indian datasets for Indian use cases, is expected by February 2026. The timeline is ambitious, yet it still places India 18 months behind South Korea and nearly five years behind China’s first domestic models. The government has deployed 38,000 GPUs against an initial target of 10,000 units and is supporting 12 domestic firms to develop foundational models.

Understanding Sovereign AI

Sovereign AI addresses a fundamental question: who decides what AI in your country knows, how it behaves, and whose interests it serves? For a country to claim AI sovereignty, it needs three interconnected components:

Compute and infrastructure: Data centers, chips, and power supply required to train and serve large models. India remains predominantly software-driven, and industry leaders acknowledge that hardware must catch up.

Foundational models: Models built for local languages and cultural nuances, not merely copies of global systems. India’s Bharat GPT initiative supports 22 Indian languages but operates at a fraction of the scale required to compete globally.

Large-scale national use cases: Broad deployment across commerce, search, logistics, and content platforms, so that real domestic usage keeps the models improving.

The China Case Study

China’s pursuit of AI sovereignty reveals both progress and persistent challenges. Beijing has championed domestic AI development, with dozens of models from companies like Alibaba (Qwen), Baidu (Ernie), DeepSeek, and Huawei. Chinese open-source models have gained significant global traction—Alibaba’s Qwen became the most downloaded model on Hugging Face, and by 2025, Chinese models overtook US counterparts in cumulative downloads by developers.

However, beneath this apparent success lies a critical dependency. Despite having domestic alternatives, many Chinese companies still rely on or prefer US foundational models for high-value tasks because domestic models lag several years behind in performance. Chinese researchers identify two primary constraints: insufficient access to high-end chips due to US export restrictions, and the enormous cost of training frontier-scale models.

The chip dependency is particularly acute. US export controls have barred China from accessing Nvidia’s most advanced AI chips like the H100 and newer Blackwell series. China runs many models on older, less capable GPUs, which limits performance and widens the capability gap over time. Chinese tech giants stockpiled Nvidia chips before restrictions tightened, and companies like Huawei, Alibaba, and Baidu are developing domestic alternatives such as Huawei’s Ascend processors.

Yet the reality remains sobering. When DeepSeek attempted to train its R2 model on Huawei’s Ascend chips in 2025, the company encountered persistent technical failures and was forced to revert to Nvidia hardware for training, keeping the Huawei chips only for less demanding inference tasks. China Telecom successfully trained models on domestic chips, but these remain less powerful than Western alternatives.

A venture capital partner noted that approximately 80% of AI entrepreneurs he encounters in the US are using Chinese open-source models—not because of technological superiority, but because of dramatically lower costs. Moonshot AI’s Kimi K2 reportedly cost just $4.6 million to train, a fraction of what OpenAI spends.

The takeaway is stark: even with massive scale, state funding, and industrial coordination, sovereign AI is extraordinarily difficult. China possesses the world’s strongest industrial machinery yet still lacks full autonomy. Export controls have limited China’s ability to deploy AI infrastructure at scale, even as it produces competitive models.

India’s Challenge

India faces similar vulnerabilities but from a weaker starting position. The vacuum created by slow domestic development has been rapidly filled by foreign AI companies. Once foreign models become entrenched, domestic ecosystems find it nearly impossible to catch up. China shows the pattern: even with domestic alternatives available, companies quietly prefer US models because they perform better.

India has ambition, talent, a massive user base, and political will. What it lacks is the full stack, particularly compute infrastructure. Training a frontier-scale large language model can require tens of thousands of high-performance GPUs dedicated to a single training run, which India does not currently possess at scale. Analysts describe this as the “compute paradox”: India is a global AI consumer but not an AI training hub.

The government’s February 2026 target for a sovereign model is significant, but the model will arrive after foreign models have already come to dominate Indian use cases. Global platforms shape how millions of Indians work, learn, and search. The country risks becoming a world-leading market for AI without being a world-leading builder of it.

Building sovereign AI requires long-term, industrial-scale investment. The critical question is whether India can build fast enough, before foreign models become the default operating system for everything Indians do online. The gap between building AI for India and building AI by India is the difference between dependence and sovereignty.

India has the right narrative, talent, and digital infrastructure. But sovereign AI is not a narrative; it is a capacity. And capacity requires sustained commitment of the kind China has made, which even there has not yet delivered full technological independence.