Google introduced Gemini 3 Flash on Wednesday in an appeal to enterprises that want to use Gemini 3 without incurring high costs. The new model shows Google capitalizing on its current popularity with enterprise customers, and it is another example of model makers offering cheaper models that are on par with their frontier models.

Gemini 3 Flash adds to a much-anticipated family of models that was introduced last month with Gemini 3 Pro and Gemini 3 Deep Think. Flash employs the same type of reasoning as Gemini 3 Pro, but uses fewer tokens to complete everyday tasks. It can also adapt how much it thinks to the use case at hand, Google said.

And it comes at a lower price. For paid users, Gemini 3 Flash costs $0.50 per million input tokens for text, image and video, and $1 per million input tokens for audio. Output costs $3 per million tokens. By comparison, Gemini 3 Pro input costs between $2 and $4 per million tokens, and its output costs between $12 and $18 per million tokens.
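To put those list prices in concrete terms, the short Python sketch below estimates what a workload would cost on each model. It uses only the per-million-token prices quoted above. The function names are hypothetical, and because the article does not say what determines where a request falls within the Pro price range, the sketch simply reports the low and high ends of that range as bounds.

```python
# Rough cost estimate based only on the per-million-token prices quoted above.
# All names here are illustrative, not part of any Google SDK.

FLASH_INPUT_PER_M = 0.50        # text, image and video input
FLASH_AUDIO_INPUT_PER_M = 1.00  # audio input
FLASH_OUTPUT_PER_M = 3.00

# Pro prices are quoted as ranges; treated here as (low, high) bounds.
PRO_INPUT_PER_M = (2.00, 4.00)
PRO_OUTPUT_PER_M = (12.00, 18.00)


def cost(tokens: int, price_per_million: float) -> float:
    """Dollar cost of `tokens` at a given price per one million tokens."""
    return tokens / 1_000_000 * price_per_million


def flash_cost(input_tokens: int, output_tokens: int,
               audio_input_tokens: int = 0) -> float:
    """Estimated Gemini 3 Flash cost in dollars for one workload."""
    return (cost(input_tokens, FLASH_INPUT_PER_M)
            + cost(audio_input_tokens, FLASH_AUDIO_INPUT_PER_M)
            + cost(output_tokens, FLASH_OUTPUT_PER_M))


def pro_cost_range(input_tokens: int, output_tokens: int) -> tuple[float, float]:
    """Estimated (low, high) Gemini 3 Pro cost in dollars for one workload."""
    low = cost(input_tokens, PRO_INPUT_PER_M[0]) + cost(output_tokens, PRO_OUTPUT_PER_M[0])
    high = cost(input_tokens, PRO_INPUT_PER_M[1]) + cost(output_tokens, PRO_OUTPUT_PER_M[1])
    return low, high


if __name__ == "__main__":
    # Example workload: 10 million input tokens and 2 million output tokens.
    flash = flash_cost(10_000_000, 2_000_000)
    pro_low, pro_high = pro_cost_range(10_000_000, 2_000_000)
    print(f"Flash: ${flash:.2f}")
    print(f"Pro:   ${pro_low:.2f} to ${pro_high:.2f}")
```

For that example workload, the estimate comes to $11 on Flash versus $44 to $76 on Pro, so the same job costs roughly four to seven times as much on Pro at these list prices.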

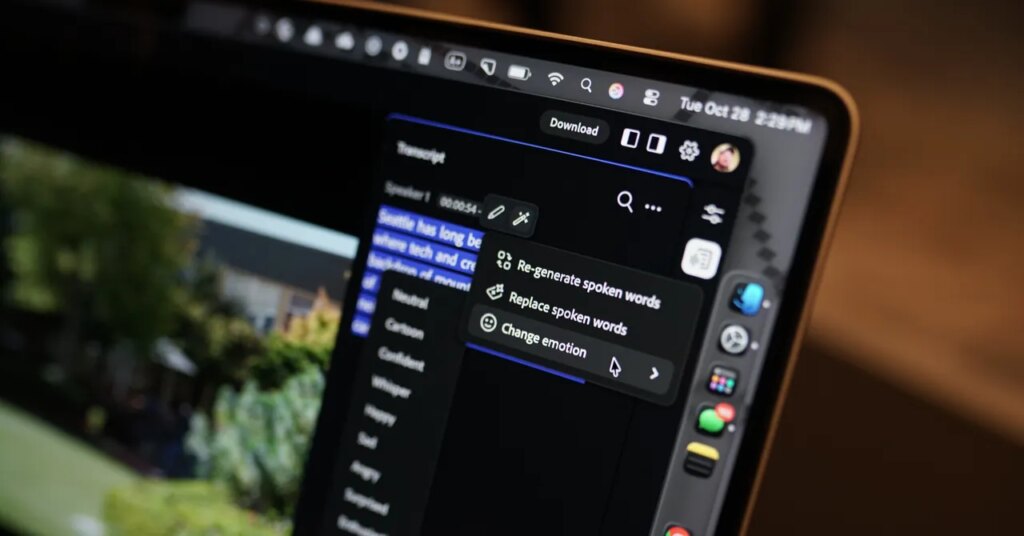

The model, which will replace Gemini 2.5 Flash in the Gemini app, matches Pro's coding performance with low latency, the cloud provider stated. Like the other models in the Gemini 3 family, Flash supports tool use and multimodal tasks. Use cases for the model include video analysis and data extraction.

“Since its launch, Gemini 3 has become a lot more top of mind for developers who are looking for multimodal experiences,” said Lian Jye Su, an analyst at Omdia, a division of Informa TechTarget. “We are seeing Google becoming a lot more capable in delivering state-of-the-art multimodal AI experiences.”

Gemini 3 Flash reflects Google’s effort to meet the needs of enterprises that weigh accuracy, response quality, cost and speed when deciding which model best suits their use case, according to analysts.

While some use cases might require the Gemini 3 Pro model, the majority can rely on the Flash model, said Arun Chandrasekaran, an analyst at Gartner.

“It’s not like you’re getting an inferior model at a much lower cost,” Chandrasekaran said. “It’s just maybe there are some tough reasoning-oriented use cases where you will use the Pro model, but for a lot of other tasks, the Flash would offer a perfect balance between the performance versus the speed and the cost.”

He added that this aligns with a broader strategy among model providers, which is to “abstract a lot of details away from the user.”

In other words, model makers want to reach a point where users cannot tell which model will answer their query unless they make an explicit selection, Chandrasekaran said.

“It’s also a way for [model makers] to lower their own costs,” he said. “If they can deliver more responses from a lower cost model, of course they will do that.”

While a lower-cost model gives enterprises another option, Google will face the challenge of helping users choose between Pro and Flash.

“You’d better have excellent talking points and swim lanes between how you offer this,” Chandrasekaran said. “That’s always going to be the challenge, given the insignificant differences at some level between these models.”